In recent weeks, we’ve been walking through the AVL signal chain, looking more closely at the technology that goes into an audio, video and lighting system and following the signal through source, processing, distribution, output and control. So far, we’ve looked at source devices, which include live capture devices, such as cameras and microphones, as well as “play” devices that either generate audio/video signals live or play back audio/video recordings.

Today, we’re moving on to the processing phase. This is an important step in the process, and the one that is most likely to be overlooked when people think about the AVL system. Perhaps this is because people typically think of audio and video systems as the technology involved with getting the source to the destination. However, an effective AVL system also ensures that everything looks and sounds great, which often requires some processing of the audio and video signals in the installation.

For much of the AVL system, the audio, video and lighting signal paths are intermingled, with audio and video coming together and splitting apart, video flowing into LED lighting, and the audio, video and lighting all controlled through an interconnected control platform. However, at the processing phase, the pathways are separated, with distinct audio, video and lighting processing. This is because each type of signal requires a unique type of processing. Audio processing accounts for differences in sound and adds a variety of effects and modifications to ensure optimal sound quality. Video processing, on the other hand, modifies the video signal to ensure video is as close to the source signal as possible while optimizing for the display. Finally, lighting processing, an aspect of an LED video lighting system, breaks a video signal down and maps pixels from the video to individual LEDs in the various LED video fixtures in an installation. Because each of these is so different, we will look at each type of processing separately, starting with audio processing.

Audio processing, which is typically handled through digital signal processing (or DSP), can be found in a variety of places within the signal chain. It can be found in a mixer, such as a soundboard, where there are adjustments to the individual audio channels on the board, as well as built-in digital rack effects and master channel effects. It can also be found in a dedicated hardware digital signal processor (such as a BSS Soundweb London DSP) or as technology that is built into another device, such as a Crown amplifier, JBL portable speaker or AMX Enova video switcher.

There are many different types of audio effects, but most can be grouped into a few general categories, based upon the basic technology behind the effect. These effects include frequency effects, like equalization; dynamic effects, such as limiters and gates; delay effects, like reverb and delay; audio conferencing effects, such as echo cancellation; and phasing and other special effects.

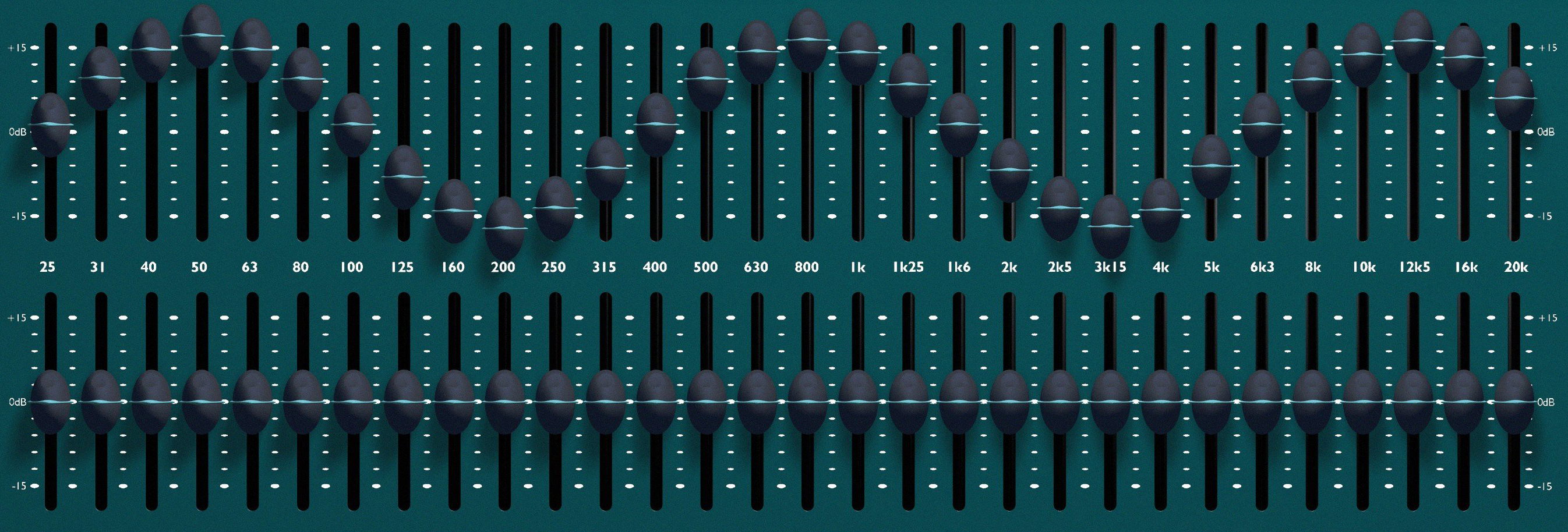

Equalization

Equalization (or “EQ”) is the boosting or attenuation (the turning up or down) of different audio frequencies to optimize the sound of the audio signal. This optimization often occurs at multiple points in the signal chain (as we’ve discussed before). Different EQs optimize different parts of the signal chain, and what they adjust depends on what the EQ is trying to do. For example, channel EQ on a soundboard adjusts the sound profile of a single audio source coming into a mixer, turning down offending frequencies in the source while turning up pleasant frequencies that should be accented. Channel EQ also turns down frequencies not needed in the original source (frequencies under 100 Hz, for example, are typically not needed in speech and are rolled off using a low-cut filter). Other EQs have other uses. An EQ on the mixed output from a soundboard tweaks the overall sound profile of the mix, while a “room EQ” compensates for the acoustics of the room. There are even speaker EQs that account for the unique physical characteristics of a particular speaker cabinet. These are typically found in DSP-enabled amplifiers, like Crown amplifiers, and settings for different speakers are typically already provided by the manufacturer.
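To make the low-cut idea concrete, here is a minimal Python sketch of a simple first-order high-pass filter with a 100 Hz cutoff. The function names, the 48 kHz sample rate and the test tones are assumptions chosen for illustration. The low cut on a real soundboard is usually steeper than this gentle 6 dB-per-octave slope, so treat it as a demonstration of the principle rather than how any particular product works.

```python
import math

def low_cut(samples, cutoff_hz=100.0, sample_rate=48000):
    """First-order high-pass (low-cut) filter over a list of float samples."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate
    alpha = rc / (rc + dt)
    out = []
    prev_in = 0.0
    prev_out = 0.0
    for x in samples:
        # Pass changes in the input; let steady (low-frequency) content decay.
        y = alpha * (prev_out + x - prev_in)
        out.append(y)
        prev_in, prev_out = x, y
    return out

def sine(freq_hz, seconds=0.5, sample_rate=48000):
    """Generate a full-scale test tone."""
    n = int(seconds * sample_rate)
    return [math.sin(2.0 * math.pi * freq_hz * i / sample_rate) for i in range(n)]

def peak(samples):
    """Peak level of the second half of the signal, after the filter settles."""
    tail = samples[len(samples) // 2:]
    return max(abs(s) for s in tail)

rumble = peak(low_cut(sine(20.0)))    # well below the cutoff: heavily reduced
voice = peak(low_cut(sine(1000.0)))   # well above the cutoff: nearly untouched
print(rumble, voice)
```

A 20 Hz rumble comes out at a fraction of its original level, while a 1 kHz tone in the speech range passes through almost unchanged.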

Dynamics

While frequency effects like equalization are designed to optimize the sound profile, dynamic effects are used to optimize the audio’s volume level. Sure, there are many opportunities to manually adjust the volume or gain in the signal chain (indeed, there is a whole discipline called “gain structure” that deals with setting these various volumes). However, it is impractical or even impossible to account for every volume variance that happens in a system, whether or not there is a live operator. Dynamic effects monitor the volume and adjust it automatically based on different variables. If done right, the average listener won’t be able to hear the effect at work. The audio will simply sound smooth, with no unnatural bursts of volume.

While there are a variety of different dynamic effects with different names and functionalities, the basic concept of how the technology works is essentially the same. Dynamic effects adjust volume based upon a volume limit (called a “threshold”) that the user sets. When the volume crosses the threshold, the effect adjusts the volume based upon the type of effect and its settings.

A limiter, for example, uses the threshold as a ceiling. With a threshold of -5 dB, any signal above -5 dB is turned down to that level. These effects are sometimes called “hard limiters” because they impose a hard limit: the sound doesn’t get louder than the threshold. Compressors work similarly to limiters, except that the ceiling is “softer.” Rather than being turned down all the way to the limit, the volume above the threshold is reduced by a set ratio. At 4:1, for example, a signal that goes 8 dB over the threshold comes out only 2 dB over it. This can sound more natural, which is why compressors are used more commonly, with limiters used to control the final output of a mixed signal. Other dynamic effects include gates and expanders, which work similarly to limiters and compressors respectively, only in reverse. In these cases, the threshold acts like a “floor,” with the effect turning down any sound that drops below the threshold. This reduces buzzing and other noise that may be in a system whenever the intended signal is absent.
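The threshold behavior of these four effects can be sketched as simple level-in, level-out functions working in decibels. The function names, ratios and the -120 dB gate floor below are assumptions for illustration. Real dynamics processors also smooth the gain change over time with attack and release settings, which this sketch leaves out.

```python
def limit_db(level_db, threshold_db):
    """Hard limiter: nothing gets louder than the threshold."""
    return min(level_db, threshold_db)

def compress_db(level_db, threshold_db, ratio):
    """Compressor: a ratio of 4.0 means 4 dB over the threshold becomes 1 dB over."""
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio

def expand_db(level_db, threshold_db, ratio):
    """Downward expander: levels under the threshold are pushed further down."""
    if level_db >= threshold_db:
        return level_db
    return threshold_db - (threshold_db - level_db) * ratio

def gate_db(level_db, threshold_db, floor_db=-120.0):
    """Gate: anything under the threshold drops to an (assumed) silent floor."""
    return level_db if level_db >= threshold_db else floor_db

# A +3 dB peak against a -5 dB threshold:
print(limit_db(3.0, -5.0))          # clamped to the threshold
print(compress_db(3.0, -5.0, 4.0))  # 8 dB over becomes 2 dB over
# Low-level hum at -50 dB against a -40 dB floor:
print(expand_db(-50.0, -40.0, 2.0))
print(gate_db(-50.0, -40.0))
```

Seen this way, a limiter is just a compressor with a very high ratio, and a gate is an expander with a very high ratio.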

Finally, there are advanced dynamic effects, such as ambient noise compensation (or ANC). With ANC, an ambient mic “listens” to the room, and the system automatically adjusts the volume according to the noise level. This helps ensure people in the room can hear the loudspeakers clearly, regardless of how noisy the room may be. This is useful in installed sound systems where no one is available to adjust the volume manually.
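As a rough illustration of the idea, the sketch below maps a measured ambient noise level to a volume boost. Every number in it (the 50 dB "quiet room" reference, the half-dB-per-dB slope and the 12 dB cap) is a hypothetical setting chosen for the example, not a value taken from any particular ANC product.

```python
def anc_gain_db(ambient_db_spl, quiet_db_spl=50.0, slope=0.5, max_boost_db=12.0):
    """Return how many dB to raise the program volume for a given room noise level.

    All defaults are hypothetical: below quiet_db_spl there is no boost, above it
    the boost grows by `slope` dB per dB of noise, capped at max_boost_db.
    """
    excess = ambient_db_spl - quiet_db_spl
    boost = max(0.0, excess * slope)
    return min(boost, max_boost_db)

for noise in (45.0, 60.0, 90.0):
    print(noise, anc_gain_db(noise))
```

The cap matters in practice: without it, a very loud room could drive the system to keep turning itself up.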

Delays

Delay effects have the most diverse applications of any of the audio effects. This is because the simple act of delaying the sound can have a variety of different impacts, depending on how the delay is applied. A basic delay in a digital signal processor does just that: it delays the sound by a user-defined amount (typically measured in milliseconds). This is used in many ways in audio system design to account for the physical limits of how fast sound travels (roughly 343 meters per second, or a little over one foot per millisecond). For example, a system designer can delay the sound going to fill speakers at the back of a room so that it reaches listeners’ ears at the same time as the sound from the front speakers, which arrives slightly later because it has to travel across the room.
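The arithmetic behind a fill-speaker delay is simple enough to sketch. The helper names and the 15-meter example distance are assumptions for illustration. In a real room, designers typically fine-tune the calculated value by measurement and by ear.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # approximate value for dry air at about 20 °C

def alignment_delay_ms(distance_m):
    """Time (ms) for sound to travel distance_m from the front speakers."""
    return distance_m / SPEED_OF_SOUND_M_PER_S * 1000.0

def delay_in_samples(delay_ms, sample_rate=48000):
    """Convert a delay time to a whole number of samples for a DSP delay line."""
    return round(delay_ms * sample_rate / 1000.0)

# Hypothetical example: fill speakers 15 m behind the main speakers.
delay = alignment_delay_ms(15.0)
print(delay, delay_in_samples(delay))
```

For the 15-meter example, the delay works out to a little under 44 milliseconds.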

Delayed sound can also be mixed in with the original sound, creating a variety of different effects. A basic echo simply repeats the sound a second time after a set delay (creating an echoing sound). A chorus (a common guitar effect) is essentially an echo with a very short delay, usually one that varies slightly over time. The combination of the original and delayed sound creates phase interactions that give the chorus its unique sound. Even a reverb is a form of delay, with multiple instances of a delayed sound layered together. This mimics the way sound behaves in a real room. Sound is delayed as it bounces off various architectural elements within a room, so any sound that occurs within the room reaches the ear at many different times. The reverb artificially recreates this, using a complicated set of multiple delays mixed together.
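Here is a minimal sketch of the two building blocks described above: a single echo mixed with the original, and a feedback delay whose repeats die away over time. The function names and settings are assumptions for illustration. A real reverb layers many delays like the second one, with different lengths, rather than using just one.

```python
def echo(samples, delay_samples, mix=0.5):
    """Mix the original with one delayed copy of itself."""
    out = []
    for i, x in enumerate(samples):
        delayed = samples[i - delay_samples] if i >= delay_samples else 0.0
        out.append(x + mix * delayed)
    return out

def feedback_delay(samples, delay_samples, feedback=0.5):
    """Feed the delayed output back in, giving a decaying train of repeats."""
    out = []
    for i, x in enumerate(samples):
        fed_back = out[i - delay_samples] if i >= delay_samples else 0.0
        out.append(x + feedback * fed_back)
    return out

# A single click followed by silence makes the repeats easy to see.
click = [1.0] + [0.0] * 9
print(echo(click, 3))            # one repeat, three samples later
print(feedback_delay(click, 3))  # repeats at samples 3, 6 and 9, each half as loud
```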

Audio Conferencing

There are, of course, a number of other effects besides these. For example, Acoustic Echo Cancellation (AEC) is used in remote conferencing systems, such as web conferencing and teleconferencing. AEC deals with the echo that occurs when a talker’s voice is sent to the distant location, played through the loudspeaker there, picked up by the microphone in that room and sent back over the line to the talker’s own audio system. An AEC effect filters this second, delayed signal out of the audio, cancelling the echo you would otherwise hear.
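One common way to build an echo canceller is with an adaptive filter, and the sketch below uses a normalized least-mean-squares (NLMS) filter as an example. This is an assumption about technique, not a description of how any specific conferencing product works. The room model, filter length and step size are all hypothetical, and the sketch ignores the harder real-world cases, such as both sides talking at once.

```python
import random

def nlms_echo_cancel(far_end, mic, taps=8, mu=0.5, eps=1e-8):
    """Subtract an adaptive estimate of the far-end echo from the mic signal."""
    weights = [0.0] * taps
    history = [0.0] * taps  # most recent far-end samples, newest first
    residual = []
    for x, d in zip(far_end, mic):
        history = [x] + history[:-1]
        estimate = sum(w * h for w, h in zip(weights, history))
        e = d - estimate  # what is left after removing the estimated echo
        norm = sum(h * h for h in history) + eps
        weights = [w + mu * e * h / norm for w, h in zip(weights, history)]
        residual.append(e)
    return residual

def simulate_room_echo(far_end, path=(0.0, 0.0, 0.6, 0.3)):
    """Hypothetical room: the mic hears delayed, quieter copies of the loudspeaker."""
    return [
        sum(g * far_end[i - k] for k, g in enumerate(path) if i - k >= 0)
        for i in range(len(far_end))
    ]

def power(samples):
    """Average power of a block of samples."""
    return sum(s * s for s in samples) / len(samples)

# Demo: random noise stands in for speech arriving from the far end.
rng = random.Random(0)
far_end = [rng.uniform(-1.0, 1.0) for _ in range(2000)]
mic = simulate_room_echo(far_end)
residual = nlms_echo_cancel(far_end, mic)
print(power(mic[-200:]), power(residual[-200:]))
```

The filter learns how the room turns the loudspeaker signal into what the microphone hears, then subtracts that prediction, so that once it has adapted very little of the far-end signal is sent back.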

Special Effects

Other audio effects you might encounter include flangers, phasers, pitch shifters and similar “special effects” that modulate the audio to create unique sounds. Although chorus effects are technically delays, they are also considered part of the “special effects” category because of the phasing the delayed sound causes. These special effects are used in performance applications, and they are typically found in soundboards and digital effects racks, like the Soundcraft Realtime Rack, or as effect pedals and multi-effect devices for instruments.

Audio processing is a complicated and deep subject, and entire books have been written about it. However, this hopefully gives you an overview of the different audio processing effects you might encounter and how they’re used.

Are there any audio effects I’ve missed? Let us know in the comments.